The organizations winning on AI right now share something simpler and more replicable than a sophisticated technology strategy. We have watched it play out consistently across our customers: they picked one problem, deployed against it with purpose, and let the results build the case for everything that followed. Budget and ambition have surprisingly little to do with it. Three keynote speakers at Unleash 2025 arrived at the same argument from entirely different disciplines, and the convergence is hard to ignore.

Inaction Now Compounds Faster Than Experimentation

Peter Hinssen opened with a framing that is hard to disregard once you hear it: the cost equation for AI has flipped. Where the cost of experimentation was once the primary variable organizations had to manage, it is now the cost of inaction that compounds in real time. AI capability scaled from autonomous 4-minute tasks in 2023 to autonomous 10-hour tasks in 2026. Big Tech capital expenditure for this year exceeds the combined inflation-adjusted cost of the Manhattan Project and Apollo Program. One Silicon Valley startup cited in the keynote now operates with 300 employees, 30 of whom are human and 270 of whom are AI agents. This is not a thought experiment. It is an operating model running in production at companies that compete for the same talent and serve the same customers as organizations still building internal alignment around whether to act.

Hinssen’s practical argument is that organizations must innovate when they can, not when they are forced to, because by the time the pressure is undeniable, the window for low-cost experimentation has likely already closed.

AI Investments Stall When Tools Outpace Work Redesign

Deloitte’s research across 9,000 respondents in 70 countries points to work design, not technology, as the primary reason AI investments lose momentum. Only 14% of companies are good at designing work that leverages both human and AI capabilities together, while 59% take a technology-first approach, buying tools before redesigning the workflows those tools are meant to change. That gap is precisely where AI investments stall, not because the technology fails, but because the work was never rebuilt around it.

The culture numbers are just as stark: 80% of workers reported believing their colleagues are performing what Deloitte calls “AI theater,” producing polished deliverables with no real AI substance underneath. The organizations generating real results are not necessarily the ones with better technology. They are the ones that treated work redesign as a leadership decision first and a technology decision second, a distinction that shows up in the outcomes. It is also the pattern we hear most often when organizations come to us after a stalled deployment: teams bought tools before anyone redesigned the work around them.

Psychological Safety Is an Implementation Requirement

Adoption is a cultural design problem, and the data presented at Unleash shows that the problem is structural. Amy Edmondson’s framework from Harvard landed differently when held next to the Deloitte findings. The organizations where employees perform for the tool rather than with it are operating, almost by definition, in environments where the incentive is to appear compliant with AI adoption rather than to experiment with it. Edmondson’s Learning Zone requires high psychological safety and high performance standards simultaneously, and her research on intelligent failure draws a clear line between organizations that prevent the wrong kinds of failure and those that accidentally prevent all of it.

For HR leaders managing AI rollouts, the practical implication is that creating the conditions for employees to experiment honestly, ask what they do not understand, and report what is not working is not a soft consideration that belongs at the end of the implementation plan. It is the deployment architecture. The organizations we work with that have the smoothest rollouts are almost always the ones that set this up deliberately, treating launch as a learning environment rather than a performance moment.

Real Returns Start With One Problem, Not a Strategy

Held together, these three frameworks describe the same phenomenon from different directions. The organizations with real AI momentum did not start with transformation roadmaps. They started with one workflow where the problem was specific, the success criteria were clear, and the timeline was short enough to produce results before organizational skepticism could harden into structural resistance.

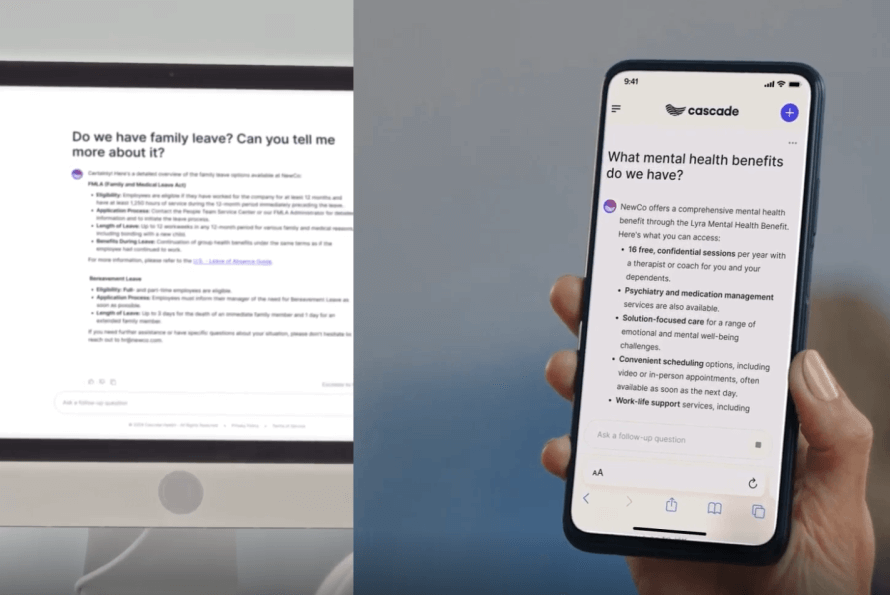

The pattern holds across very different organizational contexts. A professional services company growing at 30% year over year through M&A deployed AI agents across HR support for a workforce spanning 48 states and every Canadian province, starting with the workflows generating the most friction rather than attempting to solve everything at once. A global services company navigating significant acquisition activity took a similar path, beginning with employee Q&A in the channels people already used and treating each deployment as a learning environment rather than a performance moment. In both cases, starting somewhere specific rather than waiting for a complete strategy was what created the results that made the next investment possible.

For leaders still building the internal case for AI investment, Unleash answered the question that matters: not whether to act, but where to start first. The board conversation shifts when the first result exists, and that result requires a decision about a single workflow, not a transformation roadmap.

The organizations generating returns didn’t start with a roadmap. They named one workflow, defined what success looked like, and moved. If you’re trying to figure out which one that should be for your organization, that’s the conversation we’re built for. Most of our customers are live within four weeks of making that call.